15.3.8.4 Algorithm (Comparing Fitting Functions)

OriginPro provides tools to help users find the best model for a dataset.

The following statistics and tests are provided as references for determining the best-fitting model.

F-Test

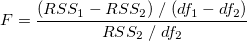

The F-test method assumes that the two models are nested, where one model is a simplified version of the other, such as a second order polynomial and a third order polynomial. The F value is computed as:

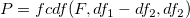

where RSS1 and df1 is the residual sum of square and the degree of freedom of the simpler model. Then we can calculate the probability p by:

If the models are not nested, the F-test results should not be considered. For example, if the models have the same degrees of freedom, df1 - df2 is zero and the F value cannot be computed.
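The F-test calculation can be sketched in Python as follows. This is a minimal illustration that assumes the standard extra-sum-of-squares form of the F statistic for nested least-squares fits; the function name `f_test` and the sample numbers are hypothetical and are not part of Origin. The p-value is taken from the upper tail of the F distribution via SciPy when it is installed; otherwise it is left as `None`.

```python
# Sketch of an F-test between two nested least-squares fits.
# All names here are illustrative; they are not Origin functions.
try:
    from scipy.stats import f as f_dist  # optional, used only for the p-value
except ImportError:
    f_dist = None


def f_test(rss1, df1, rss2, df2):
    """Return (F, p) for a simpler model (1) versus a more complex model (2).

    df is the number of data points minus the number of fitted parameters,
    so the simpler model has the larger df.
    """
    if df1 <= df2:
        # Same (or reversed) degrees of freedom: F cannot be computed.
        raise ValueError("df1 must exceed df2 for a nested comparison")
    f_value = ((rss1 - rss2) / (df1 - df2)) / (rss2 / df2)
    # Upper-tail probability of F with (df1 - df2, df2) degrees of freedom.
    p_value = f_dist.sf(f_value, df1 - df2, df2) if f_dist is not None else None
    return f_value, p_value


# Hypothetical numbers: quadratic fit (RSS 12.0, df 17) vs. cubic fit (RSS 8.0, df 16).
f_value, p_value = f_test(12.0, 17, 8.0, 16)
print(f_value, p_value)
```

A small p-value suggests that the extra parameter of the more complex model reduces the residual sum of squares by more than chance alone would explain.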

Akaike Information Criterion (AIC) Test

On the other hand, the AIC test does not require the two models to be nested. So any two models can be compared using this method.

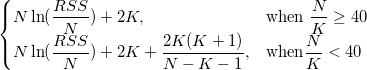

For each model, Origin calculates the AIC value by:

For two fitting models, the one with the smaller AIC value is suggested as the better model for the dataset.
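As a rough illustration of the comparison, the sketch below uses the common least-squares form AIC = n ln(RSS/n) + 2k. This form is an assumption: the exact expression Origin applies (for example, how k is counted or whether a small-sample correction is included) is the one given in the equation for this section. The function name and the sample numbers are hypothetical.

```python
import math


def aic_least_squares(rss, n, k):
    """AIC for a least-squares fit, assuming the form n * ln(rss / n) + 2 * k.

    rss: residual sum of squares, n: number of data points,
    k: number of parameters counted by the criterion.
    """
    return n * math.log(rss / n) + 2 * k


# Two hypothetical fits of the same 20 data points.
aic_a = aic_least_squares(12.0, 20, 4)  # fewer parameters, larger RSS
aic_b = aic_least_squares(8.0, 20, 5)   # one more parameter, smaller RSS

# The model with the smaller AIC value is preferred.
better = "A" if aic_a < aic_b else "B"
print(aic_a, aic_b, better)
```

In this example the drop in RSS outweighs the penalty for the extra parameter, so model B has the smaller AIC.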

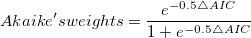

We can also make decisions based on the Akaike's weight value, which can be computed as:

where ∆AIC is the difference between two AIC values. The Akaike's weight indicates the probability of a better model.
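The weight calculation can be sketched as below, assuming the usual definition in which each model's weight is exp(-0.5 ∆AIC) normalized over the candidate models, with ∆AIC measured from the smallest AIC value. The function name and input values are hypothetical.

```python
import math


def akaike_weights(aic_values):
    """Return one weight per model; the weights sum to 1.

    Each AIC is first shifted by the smallest AIC, so the best model
    has a difference of 0 and a relative likelihood of 1.
    """
    best = min(aic_values)
    relative = [math.exp(-0.5 * (aic - best)) for aic in aic_values]
    total = sum(relative)
    return [r / total for r in relative]


# Hypothetical AIC values for two candidate models.
weights = akaike_weights([-2.2, -8.3])
print(weights)
```

The model with the smaller AIC receives the larger weight, and the gap between the weights grows quickly as ∆AIC increases.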

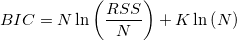

Bayesian Information Criterion (BIC)

Bayesian information criterion is a model selection criterion, which is modified from the AIC criterion.

The penalty term for BIC is similar to AIC equation, but uses a multiplier of ln(n) for k instead of a constant 2 by incorporating the sample size n. That can resolve so called over fitting problem in data fitting.
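The difference in penalty terms can be seen in a short sketch. It assumes the common least-squares forms n ln(RSS/n) + 2k for AIC and n ln(RSS/n) + k ln(n) for BIC; these forms, the function names, and the sample numbers are assumptions for illustration and may differ in detail from the expressions Origin uses.

```python
import math


def aic_least_squares(rss, n, k):
    """Assumed AIC form: n * ln(rss / n) + 2 * k."""
    return n * math.log(rss / n) + 2 * k


def bic_least_squares(rss, n, k):
    """Assumed BIC form: n * ln(rss / n) + k * ln(n)."""
    return n * math.log(rss / n) + k * math.log(n)


# Hypothetical fit: 20 data points, 5 parameters, RSS of 8.0.
rss, n, k = 8.0, 20, 5
aic = aic_least_squares(rss, n, k)
bic = bic_least_squares(rss, n, k)

# The two criteria differ only in the penalty: k * (ln(n) - 2).
extra_penalty = bic - aic
print(aic, bic, extra_penalty)
```

With n = 20, ln(n) is about 3, so each parameter costs roughly one unit more under BIC than under AIC.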

Adj. R-Square, Residual Sum of Squares and Reduced Chi-Sqr

Adj. R-Square, Residual Sum of Squares and Reduced Chi-Sqr are fitting results provided in the linear and nonlinear curve fitting tools.

To learn their definitions, refer to the statistics section in the Theory of Nonlinear Curve Fitting page.

To learn how to use them to judge whether the fit is good, refer to the Interpreting Regression Results page.

References

- Akaike, H. (1974). "A new look at the statistical model identification". IEEE Transactions on Automatic Control 19 (6): 716-723.

- Burnham, K. P. and Anderson, D. R. (2002). Model Selection and Multimodel Inference. Springer, New York.
